He should have.

That 2019 call marked one of the first major deepfake voice fraud cases, but it wouldn't be the last. What started as an isolated incident has evolved into a systematic hunting pattern, with fraudsters using AI voice cloning to target executives across industries with surgical precision.

The Anatomy of Executive Voice Hunting

The playbook is disturbingly consistent. Fraudsters don't target random employees or entry-level staff. They go straight for the C-suite, where voices carry authority and financial decisions happen fast.

When WPP CEO Mark Read was impersonated in May 2024, attackers used his YouTube footage to clone his voice for a Microsoft Teams meeting with an agency leader. The setup was sophisticated: a fake video call requesting both money and personal details from a subordinate who would naturally defer to the CEO's authority.

Meanwhile, Ferrari executives received WhatsApp messages and calls in July 2024 from someone perfectly mimicking CEO Benedetto Vigna's Southern Italian accent. The voice was so convincing that it took a specific verification question about a book recommendation to expose the fraud.

The pattern is clear: fraudsters are systematically harvesting executive voices from public appearances, earnings calls, and media interviews to create weapons-grade vocal deepfakes.

Why Executives Make Perfect Targets

The executive focus isn't coincidental. It's strategic.

First, executive voices are readily available. CEOs appear in quarterly earnings calls, conference presentations, and media interviews. Every public appearance becomes source material for voice cloning algorithms that now require as little as 20-30 seconds of audio.

Second, executive requests bypass normal approval processes. When the CEO calls requesting an urgent wire transfer, subordinates don't typically demand extensive verification. The hierarchy itself becomes the weapon.

Third, the financial stakes justify sophisticated attacks. The UK energy company lost $243,000 in a single call. For organized fraud groups, the ROI on executive voice cloning far exceeds mass phishing attempts.

The Escalation Timeline

What's most concerning is how rapidly these attacks are evolving. The 2019 energy company fraud required significant technical sophistication and planning. By 2024, fraudsters were conducting real-time video calls with multiple participants, all using cloned voices.

The Ferrari case reveals another troubling development: multi-channel attacks. Fraudsters didn't rely on phone calls alone but coordinated WhatsApp messages with voice calls, creating a more convincing narrative of urgency.

This progression suggests we're not seeing isolated incidents but the early stages of a systematic campaign against corporate leadership.

Beyond Financial Loss

While the immediate damage is financial, the long-term implications run deeper. Each successful attack erodes trust in voice communication as a whole. How long before executives refuse phone calls without video verification? How many legitimate urgent requests will be delayed by necessary security protocols?

The Ferrari executive who caught the fraud did so through personal knowledge, asking about a book recommendation only the real CEO would know. But this defense doesn't scale. Not every executive has such personal touchstones with every colleague who might need to reach them urgently.

The Arms Race Reality

We're witnessing an arms race between AI-powered fraud and AI-powered detection, and detection tends to lag behind generation. While companies scramble to implement voice authentication systems, fraudsters are already refining their next techniques.

The WPP attack's use of Microsoft Teams reveals another concerning trend: fraudsters are adapting to remote work technologies that executives have come to trust. Video calls, once considered more secure than voice calls, are now being weaponized through deepfake video technology.

Building Executive Defense Systems

The solution isn't just better detection technology. It's creating unforgeable proof of where executives actually are and what they're actually doing.

When the Ferrari CEO's voice was cloned, there was no way to verify his actual location or activities at that moment. Was he really making calls to subordinates about urgent financial matters? Or was he in a completely different meeting, unaware that his voice was being used fraudulently?

This is where the AI Defense Suite becomes critical for executive protection. Agent Safe provides real-time protection against voice cloning attacks across all messaging platforms, detecting and blocking fraudulent communications before they reach their targets. When suspicious calls or messages claim to be from executives, Agent Safe's security protocols can flag them for verification.

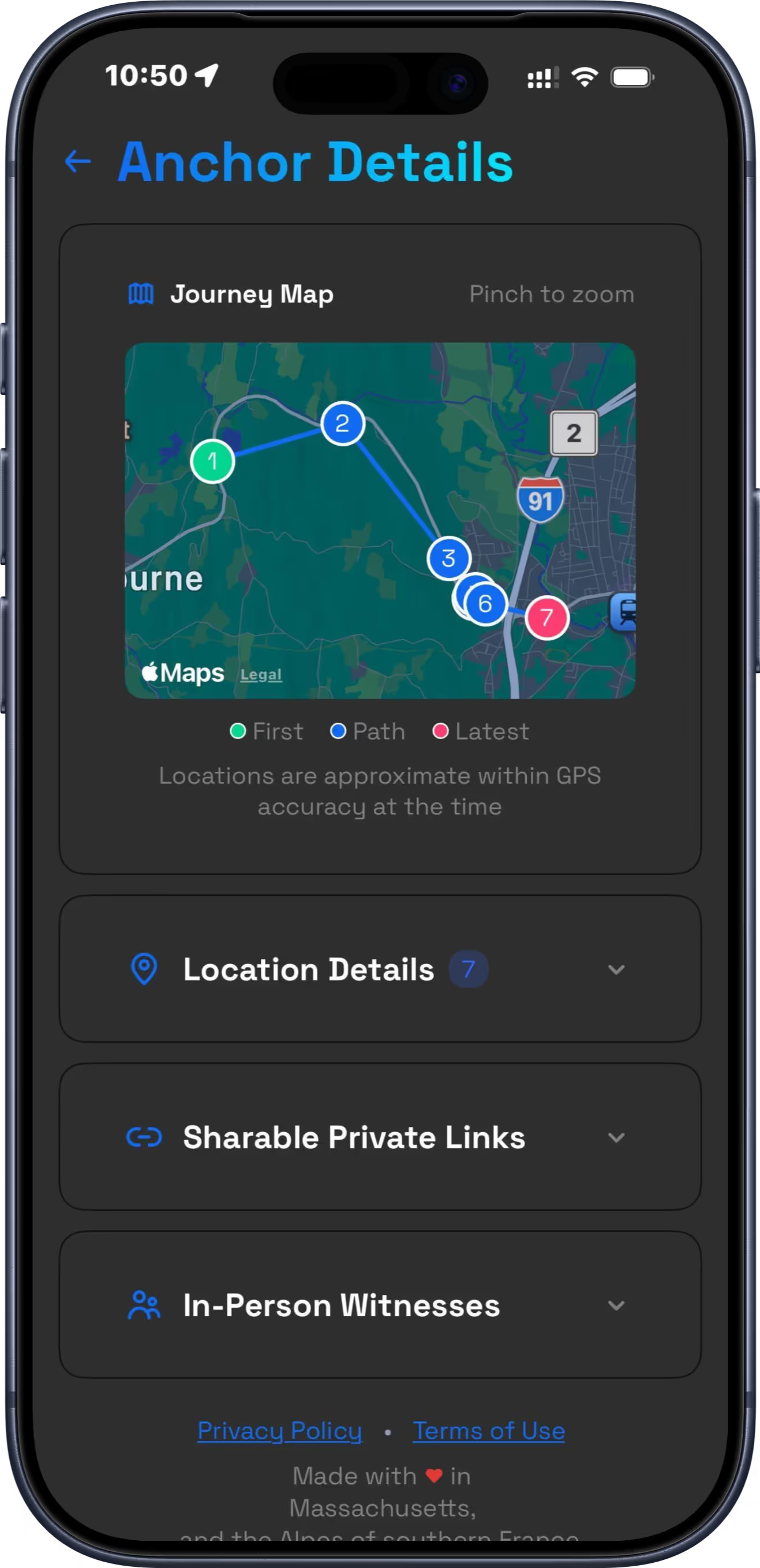
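
To make the "flag for verification" idea concrete, here is a minimal sketch of one possible rule. It is not Agent Safe's actual logic, which this article does not describe. The directory `KNOWN_HANDLES`, the keyword list `PAYMENT_TERMS`, and the `InboundMessage` type are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical directory: executive role -> contact handles known to be theirs.
KNOWN_HANDLES = {
    "ceo": {"+39-000-0001", "ceo@example.com"},
}

# Illustrative keywords only; a real system would need far richer signals.
PAYMENT_TERMS = ("wire", "transfer", "payment", "acquisition")


@dataclass
class InboundMessage:
    claimed_role: str  # who the sender says they are, e.g. "ceo"
    handle: str        # phone number or account the message came from
    text: str


def needs_verification(msg: InboundMessage) -> bool:
    """Flag a message that claims executive identity from an unknown handle,
    or that asks for money even from a known handle."""
    known = KNOWN_HANDLES.get(msg.claimed_role.lower())
    if known is None:
        return False  # sender is not claiming to be a listed executive
    unknown_handle = msg.handle not in known
    asks_for_money = any(term in msg.text.lower() for term in PAYMENT_TERMS)
    return unknown_handle or asks_for_money
```

Under these assumptions, a money request is flagged even when it arrives from a known handle, because the authority of the apparent sender is exactly what the attacks above exploit.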

Meanwhile, Location Ledger creates permanent, timestamped records of where executives actually are, making fraudulent calls much harder to execute successfully. A cloned voice might sound perfect, but it can't change the fact that the real executive was verifiably in Tokyo while the fraudulent call claimed they were calling from London.
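
The Tokyo-versus-London check can be sketched as a lookup against a timestamped log. This is an illustration under stated assumptions, not Location Ledger's real API. The log format, `LOCATION_LOG`, `recorded_city`, and `location_consistent` are hypothetical, and the sample entries are made up.

```python
from bisect import bisect_right
from datetime import datetime, timezone

# Hypothetical append-only log for one executive: (UTC timestamp, city),
# kept sorted by time. The entries are invented sample data.
LOCATION_LOG = [
    (datetime(2024, 7, 1, 8, 0, tzinfo=timezone.utc), "London"),
    (datetime(2024, 7, 2, 1, 0, tzinfo=timezone.utc), "Tokyo"),
]


def recorded_city(log, at):
    """Return the most recent city recorded at or before `at`, or None."""
    times = [t for t, _ in log]
    i = bisect_right(times, at)
    return log[i - 1][1] if i else None


def location_consistent(log, call_time, claimed_city):
    """True only when a record exists and matches the caller's claimed city."""
    city = recorded_city(log, call_time)
    return city is not None and city.casefold() == claimed_city.casefold()
```

With this sample log, a call placed at 09:00 UTC on 2 July that claims to come from London would be inconsistent with the Tokyo record. A mismatch like this is a reason to verify through another channel, not proof of fraud on its own.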

The evidence suggests executive voice hunting will only intensify. The targets are lucrative, the source material is abundant, and the technology keeps improving. Companies need defenses that go beyond hoping their executives will ask the right verification questions.