In February 2024, a finance worker at engineering giant Arup joined what appeared to be a routine video conference with the company's CFO and several colleagues. The meeting seemed legitimate. Familiar faces, regular agenda, urgent financial matters requiring attention. The employee authorized transfers totaling $25 million to Hong Kong accounts. Every person on that call was a deepfake. The meeting never happened.

While headlines continue to focus on the corporate implications (Arup is not alone), the real story lies in what's coming for ordinary people. If AI can convincingly place someone anywhere digitally, you need proof, backed by infrastructure that verifies you're actually human.

The Technology Is Already Here

Deepfakes (AI-generated synthetic media that convincingly imitates real people) are no longer the domain of sophisticated state actors or well-funded criminal enterprises. The technology has democratized. Voice cloning now requires just 10-30 seconds of audio. Convincing video deepfakes can be created in 45 minutes using freely available software. Regina-based security firm Digital Shadows has estimated that a deepfake can now be produced for $100 to $300 per 60-90 seconds of footage, easily within reach of malicious actors.

IARPA, a US government body, has concluded that a "highly realistic reality" point, beyond which humans can no longer distinguish authentic media from AI-created fake content, may be arriving. Some experts say that point may already have passed.

The numbers tell a story. According to the World Economic Forum, there was a 900% increase in deepfake fraud attempts in 2024. McKinsey reports that 93% of security experts expect deepfake attacks to "significantly impact" their organizations. But until recently, most deepfake discussion has centered on institutional threats: corporate espionage, election interference, celebrity exploitation. The personal threat, meaning deepfakes and AI-generated false accusations aimed at you, the private citizen, has received far less attention.

Three Real Threats to Your Life

Location-Based False Accusations

The deepfake threat extends far beyond fabricated videos. AI can now generate convincing evidence placing you at locations you've never visited.

Imagine synthetic evidence suggesting that you were at the scene of a crime, committed workplace violations at a particular site, or took part in business meetings you never attended. Consider the implications: a jealous ex-partner creates deepfake evidence of you at an encounter that never occurred. A business rival generates synthetic proof of regulatory violations. A malicious actor places you at the scene of a real incident you had nothing to do with.

Here's the real problem: How do you prove you weren't somewhere? Traditional alibi methods are increasingly inadequate. Eyewitness testimony is unreliable. Digital records can be questioned. Cell tower data shows approximate areas, not precise locations. Credit card purchases could have been made by someone else using your card.

Reputational Destruction

In January 2024, Eric Eiswert, then principal of Pikesville High School in Maryland, was targeted by the school's athletic director, Dazhon Darien, who created a fake audio recording that made Eiswert appear to make racist and antisemitic comments. The synthetic audio spread across social media and made national news. The damage was immediate and severe: Eiswert was placed on administrative leave, received death threats, and watched his professional reputation crumble, all because of an AI-generated recording that cloned his voice.

The audio was eventually exposed and Darien was arrested, but Eiswert's case illustrates a troubling pattern. In the gap between a deepfake's release and its debunking (if debunking ever comes), careers end, relationships dissolve, and reputations suffer irreparable harm. The burden of proof effectively shifts to the victim, who must now prove a negative: that they never said or did what synthetic evidence claims.

Financial Manipulation

While the Arup case drew media attention for its corporate scale, emerging scams use AI-cloned voices and deepfake personas to target individuals. Financial advisors warn of scenarios where voice-cloned calls from "family members" request emergency transfers, deepfake video calls impersonate trusted advisors, and AI-generated communications authorize transactions the account holder never intended.

In July 2024, Ferrari narrowly avoided a sophisticated fake when an executive received WhatsApp messages and voice clips that sounded exactly like CEO Benedetto Vigna, complete with his distinctive Southern Italian accent. The fake CEO discussed a confidential acquisition requiring immediate financial action. Only when the executive asked a question about a recent book they'd discussed did the impersonator—working from AI-generated voice clips—falter. Ferrari confirmed the near-miss publicly; most companies would not.

What You Can Do Right Now

Current detection technology exists but has serious limitations. Tools like FakeCatcher and Microsoft Video Authenticator use machine learning to analyze media for manipulation, but they require access to original files, analysis expertise, and time, luxuries rarely available when synthetic media is spreading across social platforms in real time.

Instead, the emerging consensus among security researchers is that detection must transition from after-the-fact analysis to proactive verification. Rather than trying to prove a fake is fake, prove your authentic activities are authentic—before any question arises.

Implement Callback Procedures. If someone claiming to be your spouse calls asking for a transfer to unfamiliar accounts, call them back on their known phone number. If your "boss" emails asking for gift cards, verify through established channels. This simple habit defeats most current voice-clone attacks.
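For readers who want to turn the callback habit into an explicit rule, here is a minimal Python sketch. It assumes a hypothetical `KNOWN_CONTACTS` address book that you maintain yourself; the names, numbers, and both helper functions are illustrative and not part of any real tool. The point it shows is that the number you call back never comes from the incoming request.

```python
from typing import Optional

# Hypothetical address book you maintain yourself, filled in before any
# suspicious call arrives. Names and numbers are illustrative only.
KNOWN_CONTACTS = {
    "spouse": "+1-555-0100",
    "boss": "+1-555-0101",
}


def callback_number(claimed_identity: str,
                    number_in_request: Optional[str] = None) -> Optional[str]:
    """Return the trusted number to call back, or None if there is none.

    number_in_request is deliberately ignored: contact details supplied by
    the caller are controlled by the caller and prove nothing.
    """
    return KNOWN_CONTACTS.get(claimed_identity.strip().lower())


def should_proceed(claimed_identity: str, confirmed_on_callback: bool) -> bool:
    """Act only if a trusted number exists and the callback confirmed the request."""
    return callback_number(claimed_identity) is not None and confirmed_on_callback
```

If `callback_number` returns `None`, there is no established channel to verify through, and the safe default in this sketch is to decline the request.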

Control Your Digital Presence. Every video, image, and audio recording you share, and even usage patterns like search history and social media activity, is potential training data for models that could be used against you. Consider what you share. Limit public audio and video when possible. Review privacy settings across platforms. Monitor your digital presence regularly for signs of synthetic impersonation.

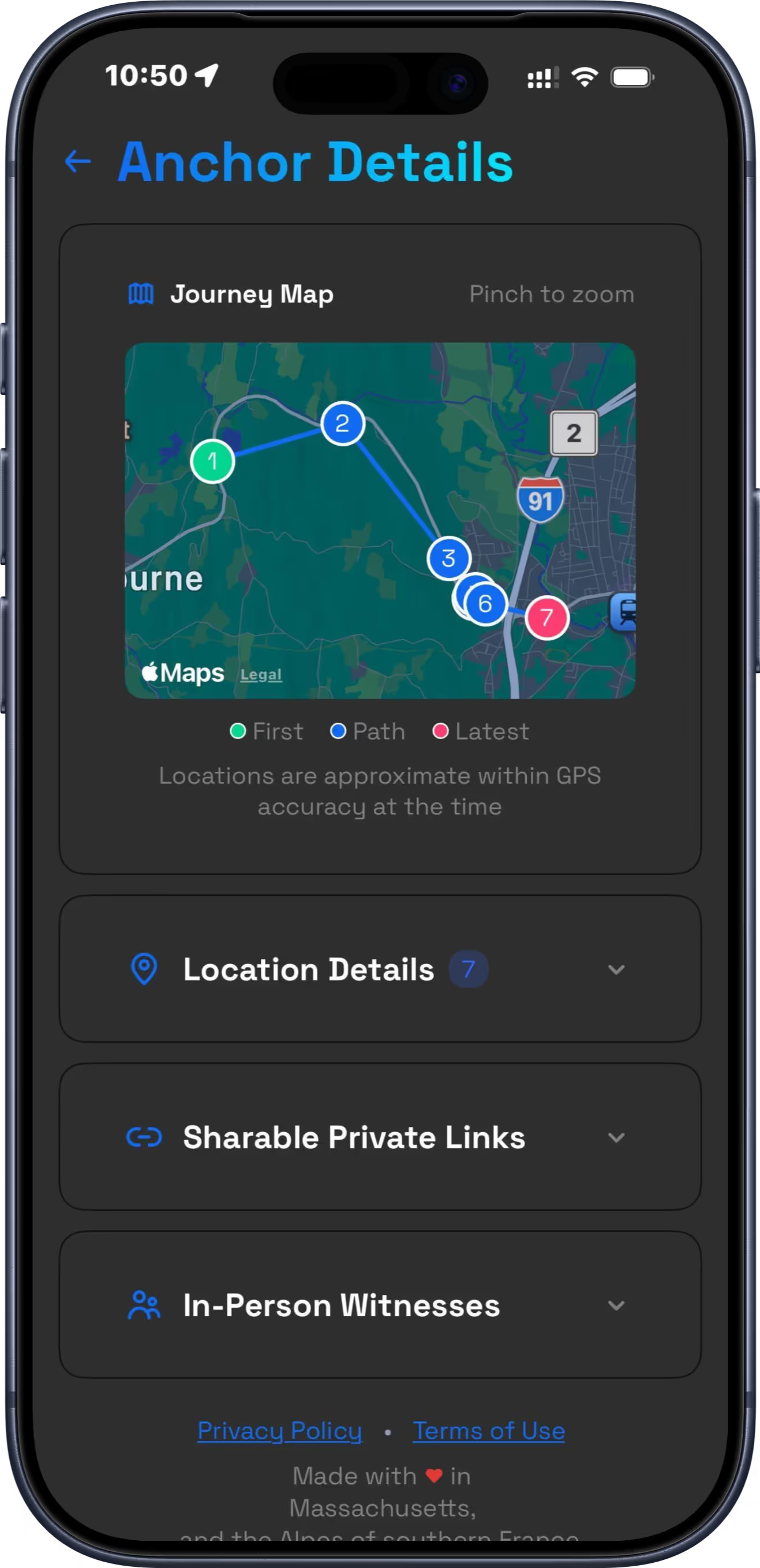

Build Your Location Record. In an era where seeing is no longer believing, truth itself needs proof.
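One way to make a personal location record harder to dispute is to make it tamper-evident. The Python sketch below is an assumption about how that could work, not a description of any particular product: each entry stores the SHA-256 hash of the previous entry, so editing an old entry breaks every hash after it. The function names, field names, and coordinates are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes identically.
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list, timestamp: str, lat: float, lon: float) -> dict:
    """Append a location entry that is chained to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"timestamp": timestamp, "lat": lat, "lon": lon, "prev_hash": prev_hash}
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Return True only if every entry is unmodified and correctly chained."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only shows that the record was not edited after the fact. It does not prove the entries were true when written, and anyone holding the whole log could rebuild it from scratch, so a convincing record would also need its latest hash lodged with an independent party.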

Preparing for What's Next

The technology continues advancing. Experts predict that real-time deepfake video calls (where AI generates convincing responses in live conversation) will be widely available before 2028. The entire infrastructure of trust, from how we verify identity to how we establish an alibi, will require fundamental rethinking.

But solutions are arriving, too. The European Union's AI Act, which entered into force in August 2024, mandates transparency for AI-generated content. Multiple U.S. states have enacted or are considering deepfake-specific legislation. The Biden administration has signaled interest in federal action.

The key is shifting your mindset from "I'll respond to a deepfake issue when I encounter one" to prevention. Recovery is not guaranteed. Take these steps now:

- Establish verification protocols and actually use them (code words, callback procedures, and, critically, verification questions).

- Control your digital presence (limit public audio/video where feasible).

- Monitor your digital presence (Google Alerts, social media searches).

- Understand what legal protections (and gaps) exist in your jurisdiction.

- Consider proactive location verification solutions.

The Bottom Line

This isn't paranoia. It's preparedness. The threats in this article aren't hypothetical. They're real incidents that happened to real people in the past twelve months. The $25 million Arup fraud. The Maryland principal whose career was upended. The Ferrari executive who happened to ask the right question at the right moment.

The good news? You're not powerless. While the technology to create convincing fakes has democratized, so have the tools to protect yourself. The solution involves habits (verification protocols), awareness (controlling and monitoring your digital presence), and technology (proactive location verification and other emerging solutions).

In an era where seeing is no longer believing, truth needs proof. The question isn't whether deepfakes will affect your life. It's whether you'll be prepared when they do.

Sign up for early access to Alibi today and own your location data.