The Anatomy of a $35 Million Deepfake Heist

According to Surfshark's research, a sophisticated scam operation based in Tbilisi, Georgia, orchestrated one of the largest deepfake fraud schemes to date. The perpetrators didn't just rely on fake celebrity videos. They built an entire ecosystem of deception.

Call center agents received AI-powered persuasion training. Victims saw fraudulent trading dashboards showing fake profits. And at the center of it all were deepfake videos of celebrities endorsing investment opportunities that didn't exist.

The scale is staggering: roughly $35 million taken from 6,000 victims across multiple countries, with individual losses ranging from hundreds to tens of thousands of dollars.

Why Celebrity Deepfakes Work So Well

Celebrity endorsements carry enormous psychological weight. When someone sees Elon Musk or a trusted financial guru apparently recommending an investment, skepticism takes a back seat to excitement.

Deepfake technology has reached a tipping point. What once required Hollywood-level resources now runs on consumer hardware. A scammer with basic technical skills can create convincing celebrity videos in hours, not weeks.

The Georgia operation exploited this perfectly. They created fake endorsements from recognizable faces, then used legitimate-looking websites and professional call centers to close the deal.

The Verification Gap That Scammers Exploit

Here's the fundamental problem: there's no reliable way to verify when and where most digital content was created. That celebrity endorsement video could have been filmed yesterday or generated by AI this morning. Without verification, everything becomes a matter of trust.

Scammers understand this gap and weaponize it. They create content that looks legitimate because there's no easy way for victims to check authenticity. By the time people realize they've been fooled, the money is gone.

This isn't just about celebrity endorsements. The same verification gap affects:

- Business communications from "executives"

- News clips from "reporters" in conflict zones

- Product testimonials from "satisfied customers"

- Emergency calls from "family members"

Building a Chain of Proof

Authentic content needs verifiable creation history. When was this created? Where? By whom? These questions should have clear, tamper-proof answers.

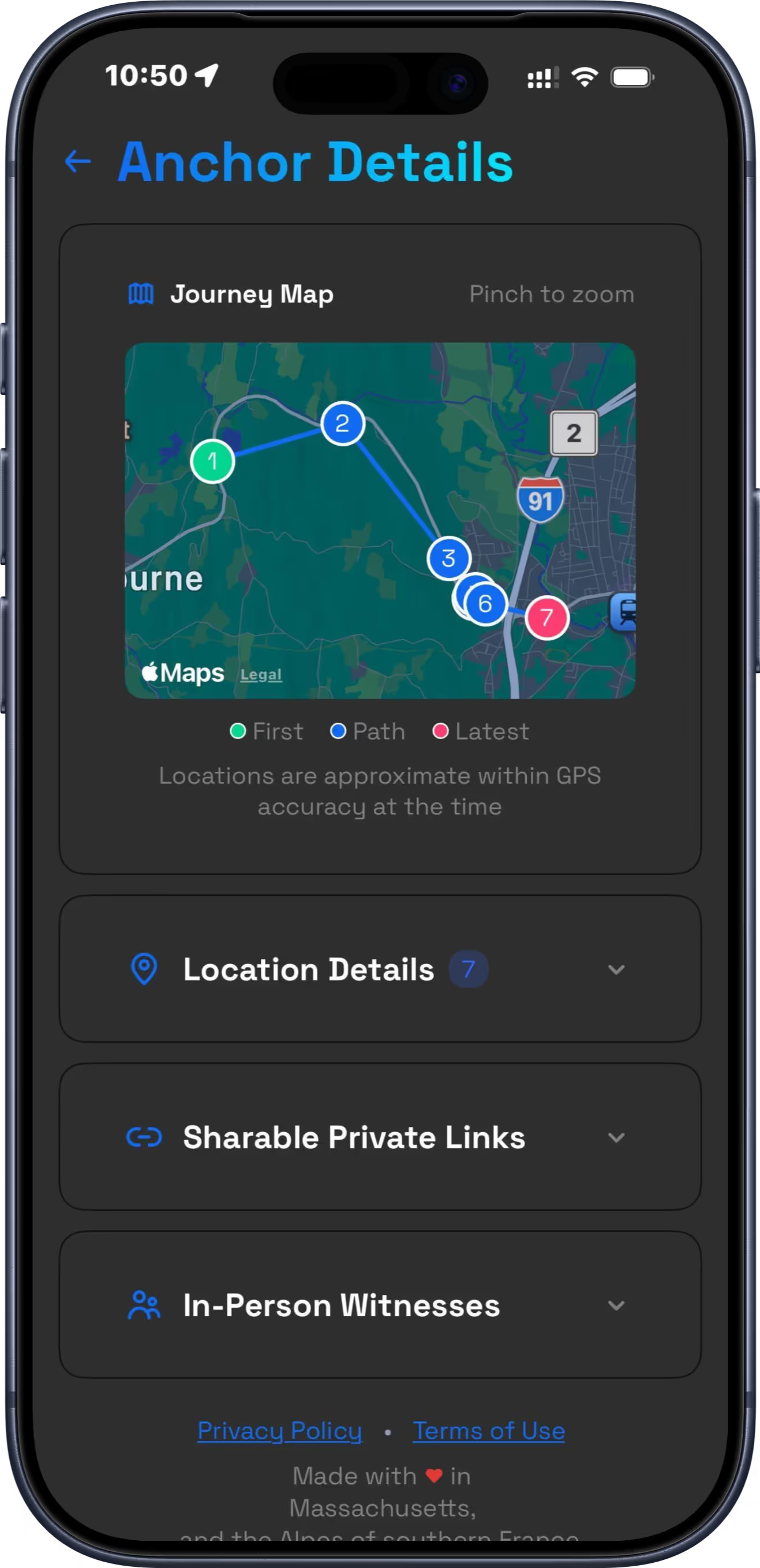

The AI Defense Suite protects against deepfake fraud through multiple verification layers. Proof of Life creates tamper-proof documentation of authentic human presence through biometrically verified "Proofies," which show that a real person (not AI) took the photo. Every image is anchored to a blockchain with an immutable timestamp.
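To make "anchoring" concrete, here is a minimal sketch of the general idea, assuming the anchored record is just a SHA-256 digest of the image plus a capture time. The function names and record layout are hypothetical and are not taken from the AI Defense Suite; the step that writes the record to a blockchain is left out entirely.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_anchor_record(image_bytes: bytes, captured_at: datetime) -> dict:
    """Build the small record that would be published (hypothetical layout).

    Only the digest and the time are included; the image itself stays private.
    """
    return {
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "captured_at": captured_at.astimezone(timezone.utc).isoformat(),
    }


def matches_anchor(image_bytes: bytes, record: dict) -> bool:
    """Recompute the digest and compare it with the anchored one."""
    return hashlib.sha256(image_bytes).hexdigest() == record["sha256"]


# Stand-in bytes; a real caller would read the photo file instead.
photo = b"\x89PNG...stand-in bytes for a real photo file"
record = make_anchor_record(photo, datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc))

print(json.dumps(record, indent=2))
print(matches_anchor(photo, record))            # the unchanged photo
print(matches_anchor(photo + b"\x00", record))  # the same photo with one extra byte
```

The point of the design is that anyone holding the photo can recompute the digest, while changing even one byte of the file produces a digest that no longer matches the published record.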

Location Ledger complements this by creating continuous verification of your whereabouts. Every 15 minutes, your position gets encrypted and stored on your device. Daily, that data gets anchored to World Chain, creating a permanent record that proves where you were when content was created.
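A 15-minute interval works out to 96 samples per day. One common way to commit to a batch like that with a single on-chain value is a Merkle root, sketched below. This is an illustration of the technique only: the article does not say how Location Ledger combines its daily data, and the entry format, function names, and prefix bytes here are assumptions. The entries are treated as opaque, already-encrypted blobs.

```python
import hashlib

SAMPLES_PER_DAY = 24 * 60 // 15  # one sample every 15 minutes


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(entries: list[bytes]) -> bytes:
    """Fold a day's entries into one 32-byte digest (illustrative scheme)."""
    if not entries:
        raise ValueError("need at least one entry")
    # Leaf and interior hashes use different prefixes so they cannot be confused.
    level = [sha256(b"\x00" + entry) for entry in entries]
    while len(level) > 1:
        if len(level) % 2:  # odd count: pair the last node with a copy of itself
            level.append(level[-1])
        level = [
            sha256(b"\x01" + level[i] + level[i + 1])
            for i in range(0, len(level), 2)
        ]
    return level[0]


# Stand-ins for the encrypted position blobs stored on the device.
day = [f"encrypted-sample-{i}".encode() for i in range(SAMPLES_PER_DAY)]
root = merkle_root(day)
print(SAMPLES_PER_DAY, root.hex())

# Changing any single entry after the fact yields a different root.
tampered = day.copy()
tampered[40] = b"encrypted-sample-moved"
print(merkle_root(tampered) == root)
```

Under this scheme only the 32-byte root would need to be published each day, while the encrypted samples themselves never leave the device.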

Real Protection for Real People

The Georgia scam succeeded because victims couldn't verify what they were seeing. They trusted deepfaked celebrities and lost their money.

With Proof of Life and Location Ledger:

- Your authentic presence is biometrically verified with Face ID or Touch ID, proving you're real

- Your whereabouts are continuously documented with tamper-proof timestamps

- Photo origins are anchored to specific times, locations, and biometric verification

- Witness attestation lets people who were with you digitally verify shared experiences

- Export capabilities generate court-ready reports when you need to prove authenticity

If you're a business owner, this protects against accusations of misconduct. If you're traveling, it creates an unshakeable alibi. If you're creating content, it proves authenticity and human origin.

The Three-Second Test

Before trusting any online content, especially investment opportunities, ask these questions:

- Can I verify when and where this was created?

- Is there independent confirmation from official sources?

- Am I being pressured to act immediately?

The Georgia scam fails on all three counts. Its deepfakes had no verifiable origin. Celebrity representatives never confirmed the endorsements. And victims were pushed to invest "before the opportunity disappeared."

Why This Will Get Worse Before It Gets Better

Deepfake quality keeps improving while detection lags behind. The tools are becoming more accessible and the targets more valuable. Celebrity endorsements are just the beginning.

We're heading toward a world where any video, audio clip, or image could be synthetic. In that environment, the ability to prove authenticity becomes invaluable.

Your Digital Defense Strategy

The best defense against deepfake fraud is building a verifiable record of your real activities and authentic human presence. When everything can be faked, documented authenticity becomes your shield.

Start creating that record today. Your future self might need to prove where you were, what you said, or that you're a real human creating real content. The AI Defense Suite ensures you have unshakeable evidence when you need it most.