In May 2024, British engineering giant Arup confirmed that it had fallen victim to a sophisticated AI deepfake fraud earlier that year. An employee in Hong Kong joined what appeared to be a legitimate video call with the company's UK-based CFO and other senior executives. The "executives" requested 15 urgent wire transfers totaling HK$200 million (about US$25 million) to five Hong Kong bank accounts.

The employee, seeing familiar faces and hearing familiar voices on the video call, authorized the transfers. Only later did Arup discover that every other person on that call was an AI-generated deepfake.

The Perfect Storm of Modern Fraud

This wasn't a simple email scam or voice spoofing. The criminals had created convincing video deepfakes of multiple Arup executives, complete with their appearance, mannerisms, and voices. They conducted a live video conference that felt completely authentic to the victim.

The sophistication is staggering, but the underlying vulnerability is surprisingly simple: there was no way to verify that these executives were actually where they claimed to be, or that they were even real humans rather than AI-generated imposters.

When "Seeing Is Believing" Isn't Enough

Traditional verification methods failed completely. The employee could see the executives, hear their voices, and watch them interact naturally on the call. Every visual and audio cue suggested authenticity.

But here's what the deepfakes couldn't fake: the actual physical location of the real executives and proof that real humans were making the request. While AI can replicate appearance and voice, it cannot place a person in a specific geographic location at a specific time, nor can it pass biometric authentication that proves a real human is present.

Multi-Layered Defense Against AI Fraud

Imagine if Arup had implemented comprehensive AI defense protocols from the AI Defense Suite. Before authorizing large transfers, they could verify multiple factors that AI cannot fake.

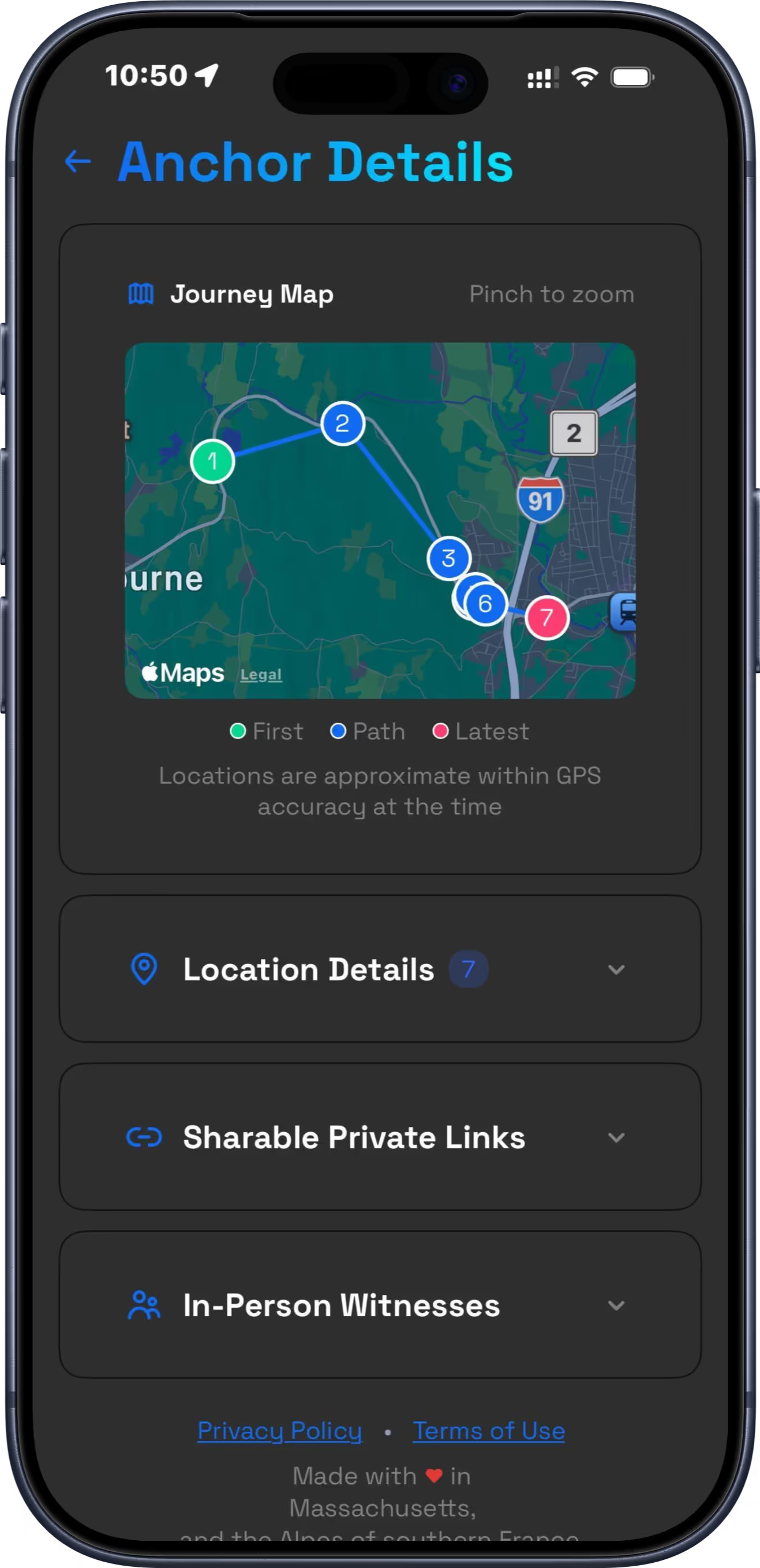

First, location verification through continuous GPS tracking could reveal a mismatch between where the "CFO" claimed to be calling from and where the real CFO's verified location history placed them that day. When location history is encrypted and anchored to a blockchain daily, it creates a tamper-evident record of where executives actually were.

Second, biometric-verified identity confirmation could require executives to take authenticated selfies (called "Proofies") using Face ID or Touch ID before high-stakes calls. These biometric verifications prove a real human took the photo and provide tamper-proof timestamps, something no deepfake can replicate.

Third, AI-powered communication security could analyze the unusual request patterns, urgent language, and financial transfer demands that are hallmarks of business email compromise and social engineering attacks.

No deepfake can simultaneously replicate your physical presence, pass biometric authentication, and fool advanced fraud detection. Your real-world proof becomes your defense against AI impersonation.
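To make the idea of daily anchoring more concrete, here is a minimal Python sketch of a hash chain over location history. It is an illustration under stated assumptions, not a description of how any particular product works: the record fields (`t`, `lat`, `lon`), the function names, and the `GENESIS` placeholder are all hypothetical, and the records are hashed in plaintext for readability where a real system would presumably hash the encrypted data. The sketch only computes the daily digests; publishing each digest to a blockchain is left out.

```python
import copy
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # hypothetical placeholder digest used before the first day


def daily_digest(prev_digest: str, day: str, records: List[dict]) -> str:
    """Hash one day's location records together with the previous day's digest."""
    payload = json.dumps(
        {"prev": prev_digest, "day": day, "records": records},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(history: Dict[str, List[dict]]) -> Dict[str, str]:
    """Return {day: digest}. Each digest is what would be anchored externally."""
    digests = {}
    prev = GENESIS
    for day in sorted(history):  # ISO dates sort chronologically
        prev = daily_digest(prev, day, history[day])
        digests[day] = prev
    return digests


def verify_chain(history: Dict[str, List[dict]], anchored: Dict[str, str]) -> bool:
    """Recompute the chain and compare it with the previously anchored digests."""
    return build_chain(history) == anchored


# Illustrative data only: two days of made-up location records.
history = {
    "2024-01-15": [{"t": "09:00", "lat": 51.5074, "lon": -0.1278}],
    "2024-01-16": [{"t": "09:05", "lat": 51.5072, "lon": -0.1276}],
}
anchored = build_chain(history)

# Editing an old record after the fact changes that day's digest and every later one.
tampered = copy.deepcopy(history)
tampered["2024-01-15"][0]["lat"] = 22.3193

print(verify_chain(history, anchored))   # True
print(verify_chain(tampered, anchored))  # False
```

Because each day's digest includes the previous one, rewriting a single old record would require re-anchoring every later day, which is what makes after-the-fact edits detectable.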

Beyond Individual Protection

While we often think about AI defense for personal protection against false accusations, the Arup case demonstrates its crucial role in corporate security. As deepfakes become more sophisticated and accessible, companies need new ways to verify the authenticity of their communications and guard against social engineering.

Multi-factor verification combining location proof, biometric authentication, and AI-powered fraud detection could become standard components of corporate security, especially for high-stakes financial decisions. When someone claims to be calling from the London office, their location history, biometric verification, and communication patterns should all back up that claim.
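As a rough illustration of how such a multi-factor check might gate a payment, the Python sketch below combines the three signals into one release decision. Everything here is an assumption made for the example: the `TransferRequest` fields, the keyword list, the HK$1 million threshold, and the rule that two or more risk flags block a transfer. The keyword matching is a deliberately simple stand-in for the AI-powered analysis described above, not an implementation of it.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical list of pressure terms; a real detector would be far richer.
URGENCY_TERMS = ("urgent", "immediately", "confidential", "secret", "today")


@dataclass
class TransferRequest:
    amount_hkd: float
    message: str
    claimed_location: str             # where the requester says they are
    verified_location: Optional[str]  # from an independent location record, if any
    biometric_confirmed: bool         # out-of-band biometric check passed
    new_beneficiary: bool             # payee has never been paid before


def risk_flags(req: TransferRequest, large_amount_hkd: float = 1_000_000) -> List[str]:
    """Collect simple warning signs typical of social engineering."""
    flags = []
    text = req.message.lower()
    if any(term in text for term in URGENCY_TERMS):
        flags.append("urgent_or_secretive_language")
    if req.amount_hkd >= large_amount_hkd:
        flags.append("large_amount")
    if req.new_beneficiary:
        flags.append("new_beneficiary")
    return flags


def may_release(req: TransferRequest) -> Tuple[bool, List[str]]:
    """Allow the transfer only if every independent check passes."""
    reasons = []
    if req.verified_location is None or req.verified_location != req.claimed_location:
        reasons.append("location_not_verified")
    if not req.biometric_confirmed:
        reasons.append("biometric_not_confirmed")
    flags = risk_flags(req)
    if len(flags) >= 2:
        reasons.append("high_risk_pattern:" + ",".join(flags))
    return (not reasons, reasons)


# Illustrative request loosely modeled on the scenario above (values are made up).
suspicious = TransferRequest(
    amount_hkd=13_000_000,
    message="Urgent and confidential: please transfer today.",
    claimed_location="London",
    verified_location=None,
    biometric_confirmed=False,
    new_beneficiary=True,
)
approved, reasons = may_release(suspicious)
print(approved)  # False
print(reasons)
```

The point of the design is that the checks are independent: a convincing face and voice on a call would satisfy none of them, and failing any single one is enough to hold the payment for manual review.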

The Growing Threat

Arup's loss represents just one known case. As AI technology improves and becomes more accessible, these attacks will likely increase in frequency and sophistication. The tools used to create convincing deepfakes are becoming cheaper and easier to use.

Traditional security measures like passwords, video calls, and even voice recognition are no longer sufficient when facing AI that can convincingly replicate human appearance and behavior.

The solution isn't to stop using video calls or trust technology less. It's to add new layers of verification that AI cannot fake.